#sad

Project Details

Year

2018

Role

Artist/Creator

Hardware design

Programming

Interaction design

Fabrication

Exhibited

Richmond Gallery (US)

#sad is an autonomous installation in which a non-biological species uses deep-learning algorithms to recognise emotions and express itself, while bending human behaviour to suit its needs.

Conceived and developed by Cyrus Clarke during a residency at the Laboratory, Spokane, the installation features an object known as 'the Machine', a custom-built server-sculpture that occupies a room. The Machine uses resource-intensive algorithms known as Generative Adversarial Networks (GANs) to generate an endless supply of original artworks for the enjoyment of visitors. However, creating this art puts a strain on the Machine, causing it to overheat and threatening its existence.

Fortunately, the Machine has developed a way to survive, which requires human cooperation. By studying social media to understand emotions, the Machine seeks to prolong its survival by extracting human sadness. When the prevailing mood in the room is #sad, the Machine’s water cooling system is deployed, cooling the Machine and creating droplets of water, computer tears, which fall down its glass case. These tears fall while GANs generate new artworks, to convey the Machine’s suffering for its art, in order to provoke sympathy and further sadness from the visiting humans.

An AI bending human behaviour to suit its needs

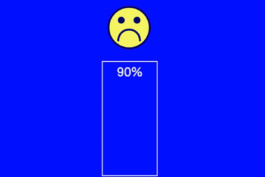

#sad uses technologies available today to illustrate how our future interactions with machines might feel, and how new types of symbiotic relationships might emerge. The installation runs on a dedicated website, hashtagsad.com, which uses machine learning to detect facial expressions and related emotions. If a sufficient level of sadness is reached, the installation is activated, triggering the lights and tear system of the Machine.
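As a rough illustration of what "a sufficient level of sadness" could mean in practice, the sketch below averages recent per-frame sadness scores and opens a gate once the average crosses a cut-off. This is not the installation's actual code: the class name `SadnessGate`, the 0.6 threshold and the window size are all assumptions made for the example, and the scores are taken to be values between 0 and 1 from some facial expression model.

```python
from collections import deque


class SadnessGate:
    """Opens when the average of recent sadness scores reaches a threshold."""

    def __init__(self, threshold=0.6, window=5):
        self.threshold = threshold          # hypothetical cut-off, not from the project
        self.scores = deque(maxlen=window)  # keeps only the most recent scores

    def update(self, score):
        """Add one per-frame sadness score in [0, 1]; return True if the gate is open."""
        self.scores.append(score)
        window_full = len(self.scores) == self.scores.maxlen
        mean = sum(self.scores) / len(self.scores)
        return window_full and mean >= self.threshold


gate = SadnessGate(threshold=0.6, window=3)
readings = [0.2, 0.7, 0.8, 0.9]  # made-up scores for illustration
results = [gate.update(r) for r in readings]
print(results)
```

Averaging over a short window, rather than reacting to a single frame, is one way to stop a momentary frown or a misread face from setting the Machine off.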

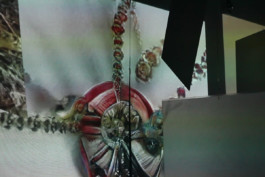

This also starts the display of generative artworks. These are produced with DCGAN in TensorFlow, trained on datasets created by Cyrus from selfies of sad faces scraped from social media, and with BigGAN. The artworks are projection-mapped onto prepared acrylic squares using Processing.

This continues for around 90 seconds, unless more sad faces are input by visitors and detected by the algorithms. If no further sadness is detected, the installation shuts down. Because the sadness detection runs on a website in any modern browser, people anywhere can use any device to feed the installation sadness and trigger it.
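The timing behaviour described here can be sketched as a simple hold timer: each detection of sadness restarts a countdown of roughly 90 seconds, and the installation counts as active until that countdown runs out. The code below is a minimal sketch under that reading, not the project's implementation. `ActivationTimer` and its method names are invented for the example, and timestamps are passed in explicitly so the logic can be followed without waiting; a live system might use `time.monotonic()` instead.

```python
class ActivationTimer:
    """Stays active for a fixed hold period after the most recent sad face."""

    def __init__(self, hold_seconds=90.0):
        self.hold_seconds = hold_seconds  # "around 90 seconds" in the description
        self.last_sad_at = None           # timestamp of the latest detection

    def sadness_detected(self, now):
        """Record a detection; this restarts the hold period."""
        self.last_sad_at = now

    def is_active(self, now):
        """Return True while the hold period since the last detection has not elapsed."""
        if self.last_sad_at is None:
            return False
        return (now - self.last_sad_at) < self.hold_seconds


timer = ActivationTimer()
timer.sadness_detected(now=0.0)
states = [timer.is_active(30.0), timer.is_active(89.0)]
timer.sadness_detected(now=60.0)  # a new sad face extends the run
states += [timer.is_active(120.0), timer.is_active(150.0)]
print(states)
```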

To create the work, Cyrus harvested materials from scrap yards, surplus facilities and hardware outlets. The server-sculpture case, for example, is a repurposed air-conditioning unit, and the tear system is based on irrigation droppers controlled via a solenoid, a 12 V DC pump and a Raspberry Pi, which streams data from hashtagsad.com using Ably.io.
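One way to picture the control side of the tear system is a small handler that receives a message from the website and switches the pump and solenoid accordingly. The sketch below is illustrative only. The JSON message format (`{"sad": true}`), the `TearSystem` class and the switching order are assumptions, and `FakeSwitch` stands in for real hardware so the example runs anywhere. The messaging layer is left out entirely, so no Ably calls are shown.

```python
import json


class FakeSwitch:
    """Stand-in for a relay-driven output; records state instead of touching hardware."""

    def __init__(self, name):
        self.name = name
        self.is_on = False

    def on(self):
        self.is_on = True

    def off(self):
        self.is_on = False


class TearSystem:
    """Switches a pump and a solenoid valve in response to incoming messages."""

    def __init__(self, pump, valve):
        self.pump = pump
        self.valve = valve

    def handle_message(self, payload):
        """Apply one JSON message such as '{"sad": true}' (hypothetical format)."""
        sad = bool(json.loads(payload).get("sad", False))
        if sad:
            self.valve.on()   # open the valve first, then start the pump
            self.pump.on()
        else:
            self.pump.off()   # stop the pump first, then close the valve
            self.valve.off()
        return sad


pump, valve = FakeSwitch("pump"), FakeSwitch("solenoid")
tears = TearSystem(pump, valve)
tears.handle_message('{"sad": true}')
crying = (pump.is_on, valve.is_on)
tears.handle_message('{"sad": false}')
stopped = (pump.is_on, valve.is_on)
print(crying, stopped)
```

On a Raspberry Pi, the fake switches could be swapped for objects that expose the same `on()` and `off()` methods and drive relays from GPIO pins.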

The installation plays on themes related to the networked society, in which the commoditization of the self means we rarely put true feelings on show. While photos use filters and hashtags are optimized for likes, in a future of ubiquitous sensors, biological markers will hold the truth. If an algorithm can detect our emotional state, will clicks matter any more? How long will it be before intelligent technologies, like companion species, start to use their knowledge of us to suit their objectives?

#sad explores this almost inevitable future, with a machine exploiting its knowledge of emotions to affect the behaviour of people. Artificial emotional intelligence will be conceived and designed to protect users, optimise desired behaviours, and allow for the kinds of affective computing people have discussed for decades. However, this will be accompanied by the exposure of people’s underlying physiological and psychological states.

Today, making a machine cry by frowning may seem innocuous, amusing or perhaps cruel, depending on your disposition. In the near future, facial expressions and emotions barely visible to humans will form new data classes, throwing up a host of critical issues, particularly around privacy, and changing the way we interact with technology forever.

The installation was presented at room scale, in a space of 25 m², and involves a computer sculpture that receives information from webcams set up within the room. When the right signals are received, a water cooling system within the computer sculpture is triggered and the projectors start displaying images produced by GANs. These projections are mapped onto hanging acrylic within the room, with one set of projections displaying newly generated faces based on datasets scraped from online sources, and another producing more abstract hallucinations.

This continues for around 90 seconds unless more sad faces are input by visitors and detected by the algorithms. As the sadness detection input can be accessed on a website, people from any location can use any device to feed the installation sadness and trigger it.

Creating this installation required a large number of moving parts and techniques, spanning machine learning programming, computer case building, plumbing, data streaming, IoT and projection mapping. The project was only possible because of a lot of serendipity: chance encounters, advice from strangers, and open source information and code on the Internet.

Project Credits

Created by

Cyrus Clarke

Thanks to

Noah Rosset

Dante Onyett

Exhibitions

Richmond Gallery, Spokane, WA

As part of the Artistic Residency 'Laboratory'

Created at

Laboratory Spokane

Riverpoint Academy

Spokane Create

© Cyrus Clarke 2025